Organisations are racing to adopt Generative AI—but many are doing so faster than their controls can keep up.

When AI systems hallucinate, expose sensitive data, or automate decisions at scale, the real question becomes: is your organisation ready to govern AI, or merely reacting to it?

Understanding why governance matters is only the first step. Without it, organisations expose themselves to a new class of AI-driven risks—many of which traditional controls were never designed to handle. Without Governance, the systems would not have any controls, structure, and guardrails to prevent unauthorized use and preserve secure access for the right people.

In this blog, we will discuss and explore Governance in AI and take it a level further by diving into Governance Readiness and how to implement it simply. In another blog, we will also explore the Risks related to AI and explore them in detail.

Need of Governance in Generative AI

In many of my discussions with organisations, Generative AI is considered an Enabler or Differentiator and hence eager to implement, organisations put AI first, and second to none the Governance of the solution. It should be consideree the most important part – Governance in and through the solution.

So let’s discuss why is Governance in AI important and how to prepare proactively

Why Governance – AI is moving faster than Controls

The main reason why this is important now is because of below reasons:

- Rapid adoption of LLMs and automation outpaces traditional governance

- New behaviours (hallucinations, bias, adversarial prompts) create unfamiliar risk classes

- Organisations must balance innovation with trust, safety, and compliance

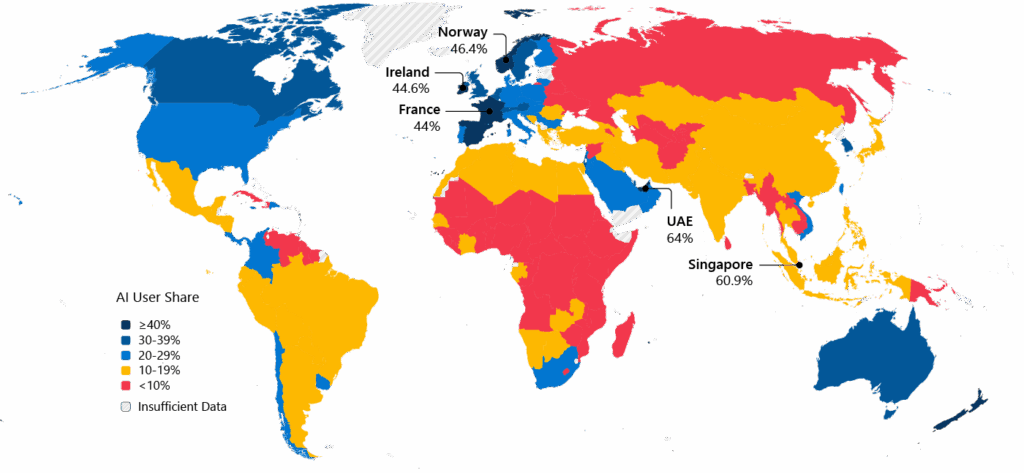

Below is a quick spread of AI growth in each of the countries (courtesy: Microsoft Global AI diffusion 20025 report) and we can see a lot more countries are over 15% usage of AI compared to earlier years and growing. Hence, organisations building on AI need to be careful too.

Where Governance – Key Risks from lack of Governance

In absence of governance, there are major risks that can impact not only the AI systems but also the systems that work with the AI solutions.

We will look at risks in detail in another blog but below are some of the risks to the systems with AI or using AI.

- Unpredictable Outputs: Confident but incorrect or harmful responses → can lead to inaccurate customer advice or flawed executive decisions.

- Adversarial Threats: Prompt injection, jailbreaks, misuse at scale → can compromise organisational confidentiality and IP

- Data Exposure: Leakage of sensitive or proprietary information → may breach privacy laws and damage organisational trust

- Systemic Dependencies: Automation embedded deeply into workflows → may lead to concurrent failures that traverse through the whole organisation

- Governance Gaps: Shadow AI, unclear accountability, regulatory pressure → uncontrolled processes and gaps can lead to unmonitored risks

Below we will explore practical strategies to address key governance gaps and risks, focusing on the most impactful solutions rather than attempting to cover every viable option.

How – Implementing AI Governance and Readiness

Governance readiness means designing AI systems with controls, accountability, and risk awareness built in—before incidents force corrective action.

The best way to implement effective AI governance, is through readiness of systems and controls instead of being reactive through events that require attention. In other words, understanding the risk profile of the organisation and planning for AI is more effective ways of Governance readiness than waiting for risk events to define the organisation’s position on governance.

Below are some ways to implement Governance readiness in solutions.

- Deploy technical safeguards: prompt shields, monitoring, adversarial testing

- Establish AI governance frameworks across teams and workflows

- Integrate risk thinking into strategy, architecture, and operations

Note: For this blog we are going to use Microsoft Foundry as a reference, but similar settings are also available through other clouds and Open source

Technical Safeguards

Technical safeguards translate governance principles into enforceable controls. They ensure AI systems operate within defined ethical, security, and compliance boundaries—at scale. Below are some procedures to safeguard your AI solution using guardrails and monitor them.

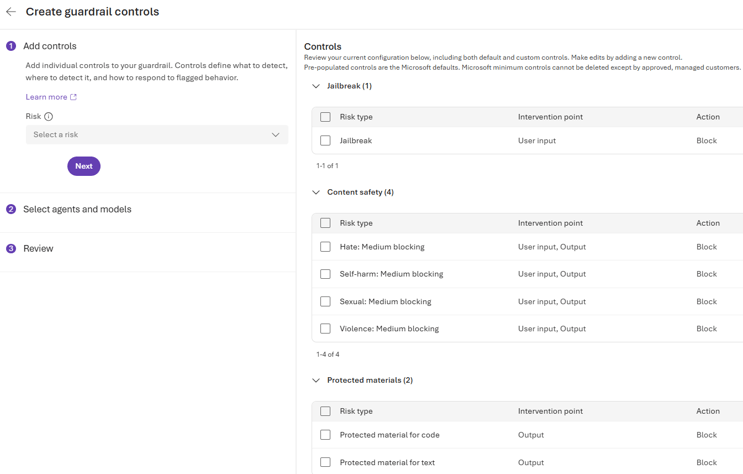

- Guardrails

- Guardrails guide AI behavior by setting boundaries to ensure ethical and safe output generation.

- Variations in API configurations and application design might affect completions and thus filtering behavior. Risks are flagged by classification models designed to detect harmful content.

- Link: Guardrails and Controls

- Prompt Shields

- Prompt shields prevent AI from generating harmful or unsafe responses by filtering input prompts effectively.

- Link: Azure Foundry – Prompt Shield (Jailbreak protection)

- Sandboxing

- Sandboxing isolates AI models in controlled environments for safer testing and deployment of new algorithms.

Example of controls that can be implemented through Guardrails using Microsoft Foundry (as on Jan 2026)*.

Monitoring and Tracing

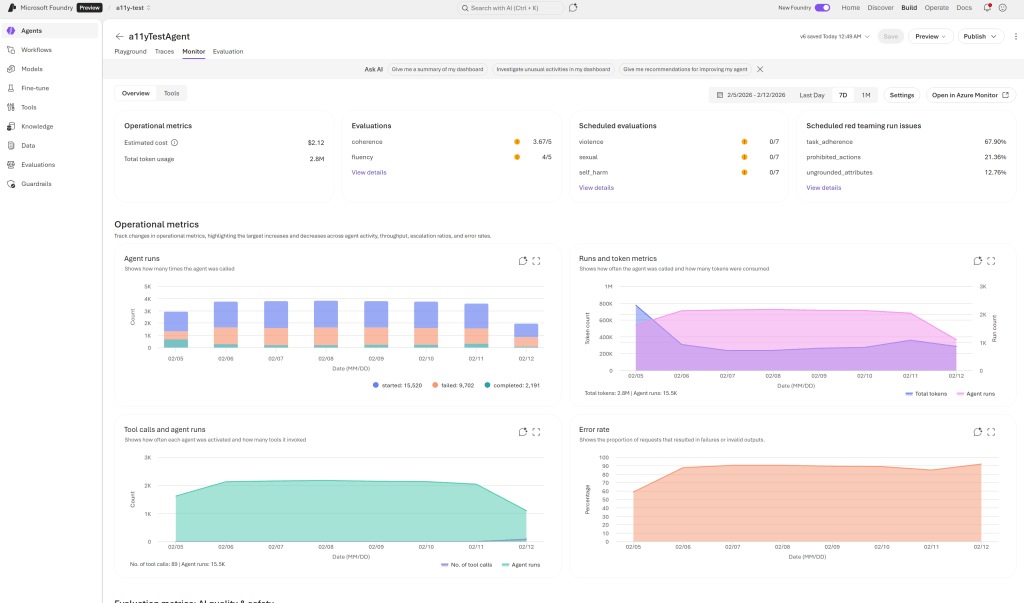

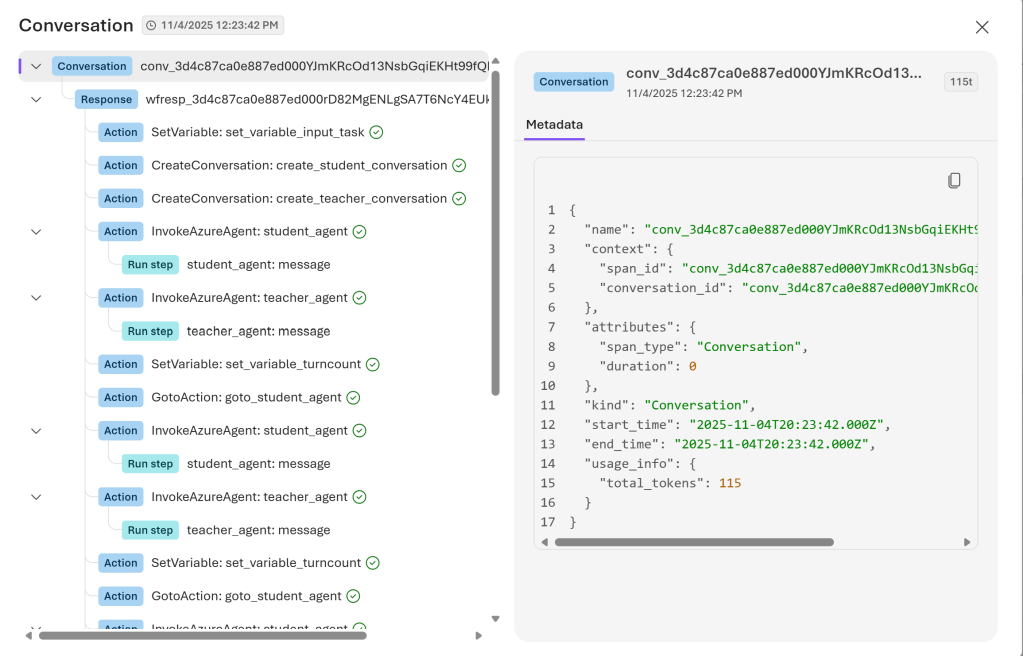

Monitoring: Use Monitor Dashboard in Microsoft Foundry to track operational metrics and evaluation results for your agents. This dashboard helps you understand token usage, latency, success rates, and evaluation outcomes for production traffic.

Tracing: Microsoft Foundry provides an observability platform for monitoring and tracing AI agents. It captures key details during an agent run, such as inputs, outputs, tool usage, retries, latencies, and costs.

AI Governance Frameworks

There are various Governance frameworks like below to assist with implementation and control of AI at a Standard level to help organisations adopt and use AI effectively. Some of the Regional and Global Standards are EU AI Act, ISO/IEC 42001:2023, ISO/IEC 38507, NIST AI Risk Management Framework (AI RMF), Voluntary AI Safety Standard (Australia).

While regulatory frameworks such as the EU AI Act focus on compliance, operational frameworks like NIST AI RMF help organisations embed risk management into day-to-day AI workflows. Below are some sample frameworks and standards that are helpful in implementing and maintaining AI standards.

Microsoft Responsible AI: Microsoft has listed guidelines for Responsible use of AI and how to apply them in AI Systems

IBM AI Governance: IBM has laid down processes for AI governance and how to achieve them.

Australia National Framework for Government: Australia’s governance of AI implementation within government.

Australia’s AI Ethics Principles: The principles were part of the Australian Government’s commitment to make Australia a global leader in responsible and inclusive AI.

Conclusion

Organisations that embed governance readiness early will move faster, innovate responsibly, and earn trust at scale.

Those that delay will find themselves governed by incidents, not intent.

Governance is key to successful AI implementation minimizing risk and improving adoption within any organisation and country. So to reiterate below are some ways we can effectively apply governance and manage risks.

- Proactive Risk Readiness

- Emphasizing proactive measures is essential for managing risks in emerging AI technologies effectively.

- Governance and Safeguards

- Combining governance frameworks with technical safeguards ensures responsible AI development and deployment.

- Strategic Resilience

- Building strategic resilience helps organizations navigate AI risks and maintain trustworthy systems over time.

In upcoming blogs, we will detail on various aspects of Governance and Risk in detail.

Leave a comment